Facebook's facial verification software reaches 'near human-level performance'

The program can match photos of the same individual with 97.25 per cent accuracy - just a shade below human performance of 97.5 per cent

Facebook has announced that the latest iteration of its facial verification software has reached near “human-level performance”.

The social network’s deep learning algorithms (computer programs that can learn to recognise patterns from large amounts of data) can now say when two images show the same individual with 97.25 per cent accuracy. By comparison, humans get this task right 97.5 per cent of the time.

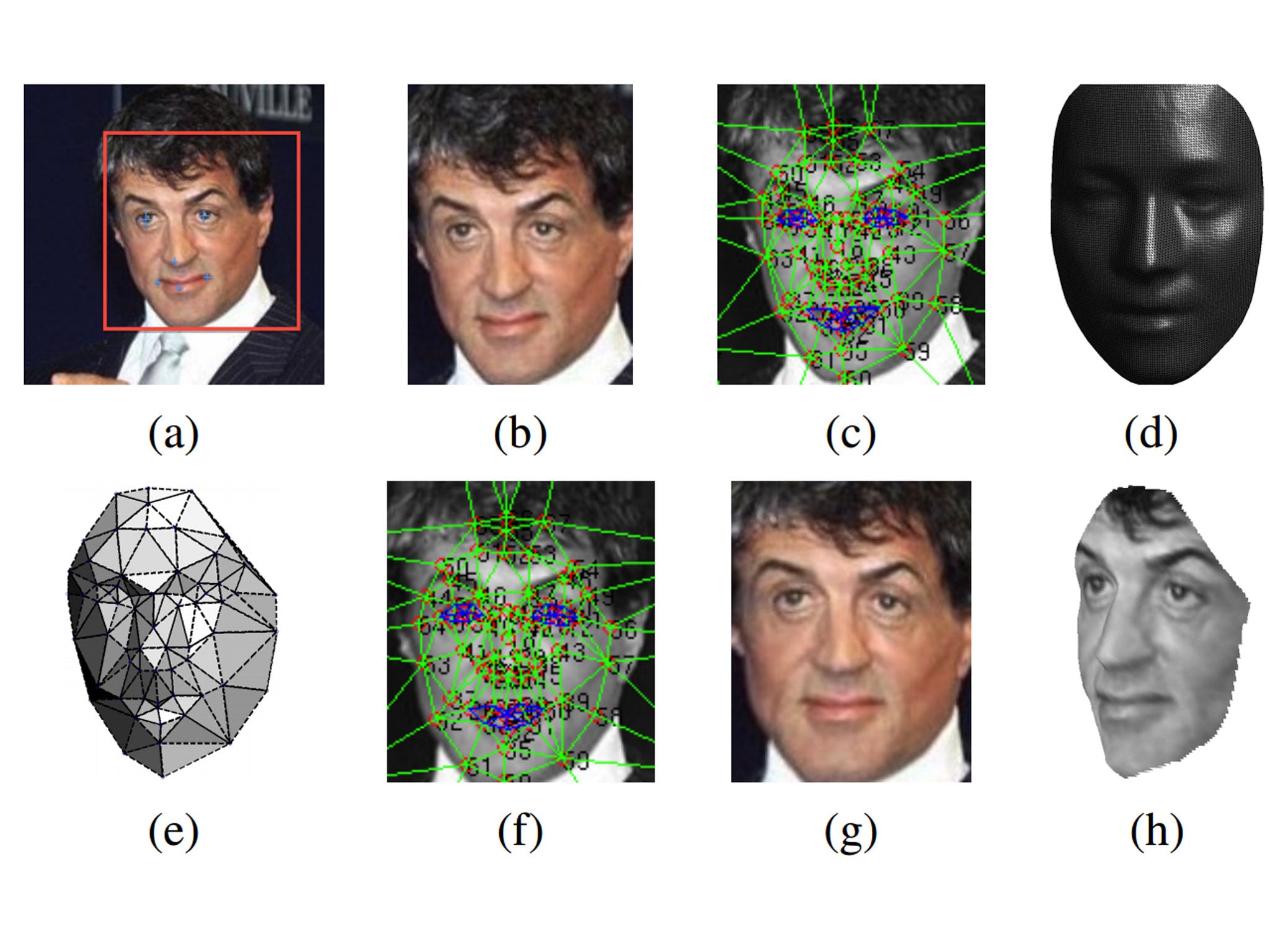

The new software is called DeepFace, and analyses photos regardless of lighting, expression or whether or not the individual is facing the camera. It does this by creating a 3D map of the facial features in the picture and "recognises" the individual by calculating various numerical descriptions of the face – eg, the ratio between eyebrows and mouth and the distance between nose and ears.

The screenshot above shows the technology in action. Beginning with a snapshot of an individual face (a), the software processes this into a wireframe model (e) and finally a 3D recreation of the face (h).
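To give a rough sense of what "numerical descriptions of the face" might look like, here is a minimal Python sketch. It is not Facebook's code: the landmark names, the pixel coordinates and the two measurements are all made up for illustration, and DeepFace itself learns its descriptions from data rather than using hand-picked ratios like these.

```python
import math

# Hypothetical 2D landmark positions (in pixels) for a single face.
# These values are invented for illustration only.
landmarks = {
    "left_eyebrow": (30.0, 40.0),
    "right_eyebrow": (70.0, 40.0),
    "nose": (50.0, 60.0),
    "mouth": (50.0, 80.0),
    "left_ear": (10.0, 60.0),
}


def midpoint(a, b):
    """Return the point halfway between two (x, y) points."""
    return ((a[0] + b[0]) / 2, (a[1] + b[1]) / 2)


def describe_face(points):
    """Turn landmark positions into a few simple numbers."""
    brow_width = math.dist(points["left_eyebrow"], points["right_eyebrow"])
    brow_centre = midpoint(points["left_eyebrow"], points["right_eyebrow"])
    brow_to_mouth = math.dist(brow_centre, points["mouth"])
    nose_to_ear = math.dist(points["nose"], points["left_ear"])
    return {
        # A ratio stays the same if the photo is scaled up or down.
        "brow_width_to_brow_mouth": brow_width / brow_to_mouth,
        # A raw distance changes with image size, so it is less robust.
        "nose_to_ear": nose_to_ear,
    }


description = describe_face(landmarks)
print(description)
```

Ratios are used alongside raw distances here because a ratio does not change when the same face is photographed closer to or further from the camera.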

DeepFace is technically a facial verification system (matching images to images) rather than a facial recognition program (matching images to a name), but many of the underlying techniques are transferable between the two goals.
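The difference between the two tasks can be sketched in a few lines of Python. This is an illustration under stated assumptions, not DeepFace's method: each face is assumed to have already been reduced to a short list of numbers, the similarity measure (cosine similarity) and the 0.9 threshold are arbitrary choices, and the names and values in the gallery are invented.

```python
import math

THRESHOLD = 0.9  # hypothetical cut-off; a real system would tune this on data


def cosine_similarity(a, b):
    """Similarity of two equal-length vectors; 1.0 means same direction."""
    dot = sum(x * y for x, y in zip(a, b))
    norm_a = math.sqrt(sum(x * x for x in a))
    norm_b = math.sqrt(sum(y * y for y in b))
    return dot / (norm_a * norm_b)


def verify(face_a, face_b, threshold=THRESHOLD):
    """Verification: do these two images show the same person?"""
    return cosine_similarity(face_a, face_b) >= threshold


def identify(face, gallery, threshold=THRESHOLD):
    """Recognition: which named person, if any, does this image show?"""
    best_name, best_score = None, threshold
    for name, reference in gallery.items():
        score = cosine_similarity(face, reference)
        if score >= best_score:
            best_name, best_score = name, score
    return best_name


# Invented face descriptions for illustration.
photo_1 = [0.90, 0.10, 0.30]
photo_2 = [0.88, 0.12, 0.31]
gallery = {"alice": [0.90, 0.10, 0.30], "bob": [0.10, 0.90, 0.20]}

print(verify(photo_1, photo_2))    # image-to-image comparison
print(identify(photo_2, gallery))  # image-to-name lookup
```

Both functions rely on the same comparison step, which is why techniques carry over between the two goals; recognition simply adds a set of named reference faces to search through.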

Facebook says that it used a pool of 4.4 million labelled faces from 4,030 different people on its network to train the system. The software is not currently being introduced to Facebook itself, but is simply being presented to garner feedback from other researchers.

The social network first introduced its facial recognition software back in 2010 to American users before bringing it worldwide in 2011. In 2012 the EU forced Facebook to drop the functionality in Europe and delete all the facial templates it had collected from users. Facial recognition remains unavailable for users in the UK.
