Dual camera smartphones – the missing link that will bring augmented reality into the mainstream

Apple's announcement that the new iPhone 7 Plus will feature a dual camera signals a future for augmented reality that extends beyond catching them all

Smartphones boasting “dual cameras” are becoming more common, and news that they will feature on the just-announced iPhone 7 Plus indicates their arrival in the mainstream. However, while dual cameras may stem from efforts to improve picture quality, they have the potential to lead us down much more interesting paths: the real story may be that Apple is using dual cameras to position itself for the augmented reality world ushered in by the Pokémon Go phenomenon.

Augmented reality, or AR, has for years been a solution in search of a problem. In the last few months, Pokémon Go has taken augmented reality into the mainstream after years in the wilderness, and with the Apple Watch now able to run the game directly, Apple surely hopes it has found the answer. The new dual camera system in the iPhone 7 Plus may be just the platform on which to expand fully into AR.

Manufacturers present dual cameras as a way to make smartphone cameras behave more like professional digital single lens reflex (DSLR) cameras – the digital descendants of a camera design popular since the mid-20th century. The main reason for the rise of dual cameras is physical necessity. It’s not possible to attach a professional-grade zoom lens to a mobile phone; today’s smartphones are simply too small. Implementing zoom in software instead quickly runs into limits on picture quality. But as lens hardware falls in cost, adding a second physical camera is now feasible, with software switching between the two and interpolating images from both.

Twin cameras at different focal lengths, for example one wide-angle and one telephoto, offer several benefits. The telephoto lens can be used to compensate for the distortion common in wide-angle lenses by blending in the flattening effect of a long lens. Having two slightly different types of sensor gives better dynamic range: the range of light and dark in a scene within which a camera can capture detail. Greater dynamic range and more information about the scene give sharper detail and richer colour. Relying on real optical lenses rather than software to zoom reduces the digital noise that makes images grainy. And with less noisy images carrying more image data, it’s possible to improve the quality of software zoom too.

Images with a shallow depth of field, showing both in-focus and out-of-focus areas, are very difficult to achieve with small-sensor smartphone cameras.

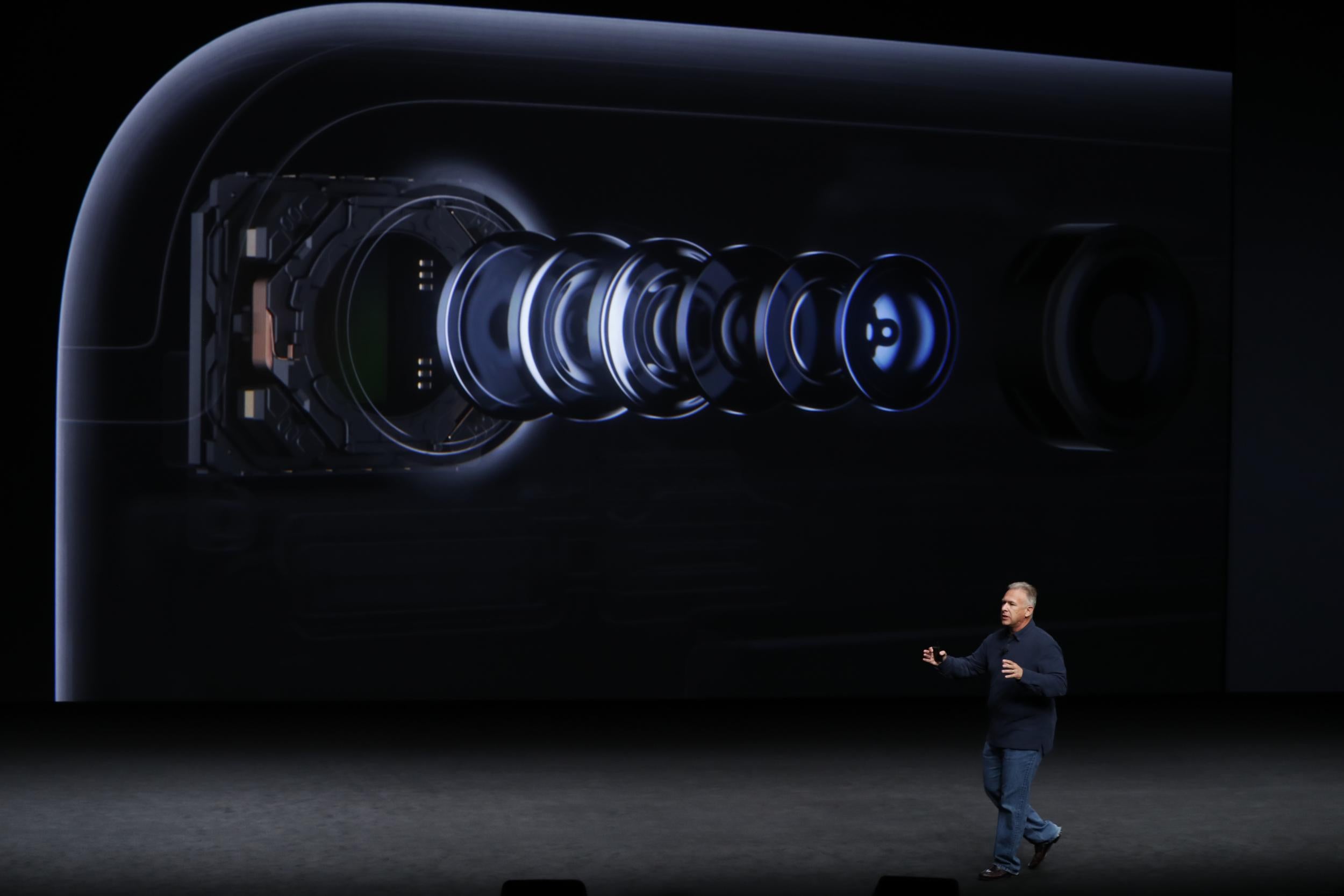

The twin 12-megapixel cameras on the rear of the iPhone 7 Plus are the result of Apple’s canny purchase of camera module manufacturer Linx in 2015. Building on Linx’s technology, Apple has incorporated a 28mm wide-angle lens and a 56mm telephoto lens into the phone. The camera software uses either or both cameras to provide the best quality images, while keeping this process transparent through the iOS touch interface, which offers smooth movement between wide angle (1x), telephoto (2x), and software zoom of up to 10x.
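To make the switching concrete, here is a minimal Python sketch of how camera software might choose a lens for a requested zoom level, assuming only the figures quoted above (28mm wide, 56mm telephoto, up to 10x). The function name `select_camera` and its rule are hypothetical: it simply switches to the telephoto at 2x and crops digitally beyond that, whereas the real iPhone software also blends images from both cameras.

```python
from typing import Tuple

# Focal lengths and zoom limit quoted in the article; the selection rule is hypothetical.
WIDE_FOCAL_MM = 28.0
TELE_FOCAL_MM = 56.0
MAX_ZOOM = 10.0


def select_camera(zoom: float) -> Tuple[str, float]:
    """Return (camera, digital_crop_factor) for a requested zoom level.

    Zoom is expressed relative to the wide-angle camera (1x = 28mm).
    Hypothetical rule: use the telephoto as soon as its optical
    magnification is reached, then make up the rest with a digital crop.
    """
    if not 1.0 <= zoom <= MAX_ZOOM:
        raise ValueError(f"zoom must be between 1x and {MAX_ZOOM:g}x")
    tele_magnification = TELE_FOCAL_MM / WIDE_FOCAL_MM  # 2.0 for 56mm vs 28mm
    if zoom >= tele_magnification:
        return "telephoto", zoom / tele_magnification
    return "wide", zoom


if __name__ == "__main__":
    for z in (1.0, 1.5, 2.0, 5.0, 10.0):
        camera, crop = select_camera(z)
        print(f"{z:>4}x -> {camera} camera, {crop:.2f}x digital crop")
```

Real camera software may well weigh other factors, such as the light level in the scene; this sketch deliberately ignores them to show only the basic hand-off between the two lenses.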

Building stereoscopic scenes

As appealing as this may be, adding a second rear camera opens up a much more interesting set of possibilities. Having two slightly different viewpoints means live images can be processed to estimate depth for each captured pixel, so that images gain an extra dimension of depth data. Since the distance between the two cameras is known, software can triangulate in real time to determine the distance to corresponding points in the two images. In fact, our own brains do something similar, called stereopsis, which is what lets us see the world in three dimensions.
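For a rectified stereo pair, the triangulation step comes down to one relation: depth Z = f × B / d, where f is the focal length in pixels, B is the baseline (the distance between the two cameras) and d is the disparity, meaning how far a point shifts horizontally between the left and right images. The Python sketch below pairs that formula with a deliberately naive one-dimensional block matcher for finding d. The function names and the camera numbers in the example are illustrative assumptions, not iPhone 7 Plus specifications, and a production system would use far more robust matching.

```python
from typing import Sequence


def match_disparity(left_row: Sequence[float], right_row: Sequence[float],
                    x: int, window: int = 2, max_disparity: int = 16) -> int:
    """Find the horizontal shift d that best aligns a pixel between two rows.

    For a rectified pair, a point at column x in the left image appears at
    column x - d in the right image. Small windows are compared using the sum
    of absolute differences (SAD), and the shift with the lowest cost wins.
    """
    if x - window < 0 or x + window >= len(left_row):
        raise ValueError("window around x must lie inside the row")
    best_d, best_cost = 0, float("inf")
    for d in range(max_disparity + 1):
        if x - d - window < 0:  # candidate window would run off the right image
            break
        cost = sum(abs(left_row[x + k] - right_row[x - d + k])
                   for k in range(-window, window + 1))
        if cost < best_cost:
            best_d, best_cost = d, cost
    return best_d


def depth_from_disparity(focal_length_px: float, baseline_m: float,
                         disparity_px: float) -> float:
    """Triangulate depth: Z = f * B / d (a larger shift means a closer object)."""
    if disparity_px <= 0:
        raise ValueError("disparity must be positive (zero means infinitely far)")
    return focal_length_px * baseline_m / disparity_px


if __name__ == "__main__":
    # Toy rows: a feature at columns 10-12 in the left image appears
    # 3 pixels further left (columns 7-9) in the right image.
    left = [0.0] * 20
    right = [0.0] * 20
    left[10:13] = [9.0, 5.0, 7.0]
    right[7:10] = [9.0, 5.0, 7.0]
    d = match_disparity(left, right, x=11)
    # Illustrative camera parameters (assumptions, not real device specs).
    print(d, depth_from_disparity(focal_length_px=1000.0, baseline_m=0.01,
                                  disparity_px=d))
```

Because depth is inversely proportional to disparity, nearby objects shift a lot between the two views and distant ones barely move, which is why a short baseline between phone cameras gives its most precise depth estimates at close range.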

The iPhone uses machine learning algorithms to scan objects within a scene, building up a real-time 3D depth map of the terrain and objects. Currently, the iPhone uses this to separate the background from the foreground so that it can selectively focus on foreground objects. This effect of blurring out background details, known as bokeh, is a feature of DSLRs and not readily available on smaller cameras such as those in smartphones. The depth map allows the iPhone to simulate a variable aperture, rendering chosen areas of the image out of focus. While an enviable addition for smartphone camera users, this is a gimmick compared to what the depth map can really do.
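Once a per-pixel depth map exists, the background-blur idea can be sketched in a few lines. The Python example below is a deliberate simplification, assuming a greyscale image and a depth map in metres stored as nested lists: pixels no further away than a hypothetical `focus_max_m` threshold stay sharp, and everything beyond it is replaced by a box-blurred copy. It is not Apple's method, which the article describes as relying on machine learning, and it applies a single blur strength rather than one that grows with distance as real lens blur does.

```python
from typing import List

Image = List[List[float]]  # greyscale pixel values (or depths), row-major


def box_blur(img: Image, radius: int = 1) -> Image:
    """Average each pixel with its neighbours inside a (2r+1) x (2r+1) square."""
    h, w = len(img), len(img[0])
    out = [[0.0] * w for _ in range(h)]
    for y in range(h):
        for x in range(w):
            total, count = 0.0, 0
            for dy in range(-radius, radius + 1):
                for dx in range(-radius, radius + 1):
                    yy, xx = y + dy, x + dx
                    if 0 <= yy < h and 0 <= xx < w:  # skip neighbours outside the image
                        total += img[yy][xx]
                        count += 1
            out[y][x] = total / count
    return out


def simulate_shallow_focus(img: Image, depth_m: Image,
                           focus_max_m: float, radius: int = 1) -> Image:
    """Keep pixels within focus_max_m sharp; blur everything further away."""
    blurred = box_blur(img, radius)
    return [
        [img[y][x] if depth_m[y][x] <= focus_max_m else blurred[y][x]
         for x in range(len(img[0]))]
        for y in range(len(img))
    ]


if __name__ == "__main__":
    image = [[0.0, 0.0, 9.0],
             [0.0, 0.0, 9.0],
             [0.0, 0.0, 9.0]]
    # Left column is a "subject" 1 m away; the rest is background 5 m away.
    depth = [[1.0, 5.0, 5.0] for _ in range(3)]
    for row in simulate_shallow_focus(image, depth, focus_max_m=2.0):
        print(row)
```

A simple blur like this also smears foreground colours into the background near object edges; avoiding such halos is one reason an accurate depth map matters so much for convincing results.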

Designing natural interactivity

What Apple has is the first step toward a device like Microsoft’s HoloLens, an augmented reality head-mounted display currently in development. Microsoft found little success with its previous Kinect system, briefly offered as a controller for Xbox games consoles. But for researchers and engineers the Kinect is a remarkable and useful piece of engineering that can be used to interact naturally with computers.

Microsoft is integrating some of the hardware, software and lessons learned from Kinect into the HoloLens, extending them with Simultaneous Localisation and Mapping, or SLAM, in which the surrounding area is mapped in 3D and that information is used to place graphical overlays onto or within a live video feed.

Software offering similar analysis of people’s poses and locations within a scene on dual camera smartphones would turn the phone into a virtual window onto the real world. Using hand gesture recognition, users could interact naturally with a mixed reality world, with the phone’s accelerometer and GPS data detecting movement and driving changes to how that world is presented and updated.

There has been speculation that Apple intends to use this in Apple Maps to augment real-world objects with digital information. Other uses will come as third-party manufacturers and app designers link their physical products to the social media, shopping and payment opportunities available through a smartphone.

Apple has not arrived here by accident. In addition to acquiring Linx, Apple also purchased augmented reality pioneer Metaio in 2015, suggesting a game plan to develop a mixed reality platform. Metaio was working not just on augmented reality software but also on a mobile hardware chipset that would run AR much faster.

Most tellingly, Apple acquired PrimeSense in 2013. If the name doesn’t sound familiar, PrimeSense is the Israeli firm that licensed its 3D-sensing technology to Microsoft so it could develop … the Kinect. Adding Apple’s focus on social networking into the mix, augmented reality offers the opportunity to build a messaging system with telepresence – holographic or AR representations of distant conversation partners – or a FaceTime videoconferencing service with digitised backgrounds and characters. Soon, it might not just be Pokémon that we’re chasing on our phones.

Jeffrey Ferguson is lecturer in pervasive and mobile computing at the University of Westminster

This first appeared in The Conversation (theconversation.com)
