Minority Report-style technology arrives: Introducing hands-free touch-screen ‘ultrahaptics’

Bristol researchers debut touch-screen computers you don't have to touch

It goes by the decidedly unsexy name ‘ultrahaptics’, but a new technological prototype announced by researchers from the University of Bristol is reminiscent of the gloves Tom Cruise uses in sci-fi classic “Minority Report” – and is nothing short of astonishing.

The device is essentially a touchscreen so sensitive that a user doesn’t actually need to touch it. Instead, it works through the vibrations of soundwaves alone, and its feedback can be ‘felt’ in mid-air.

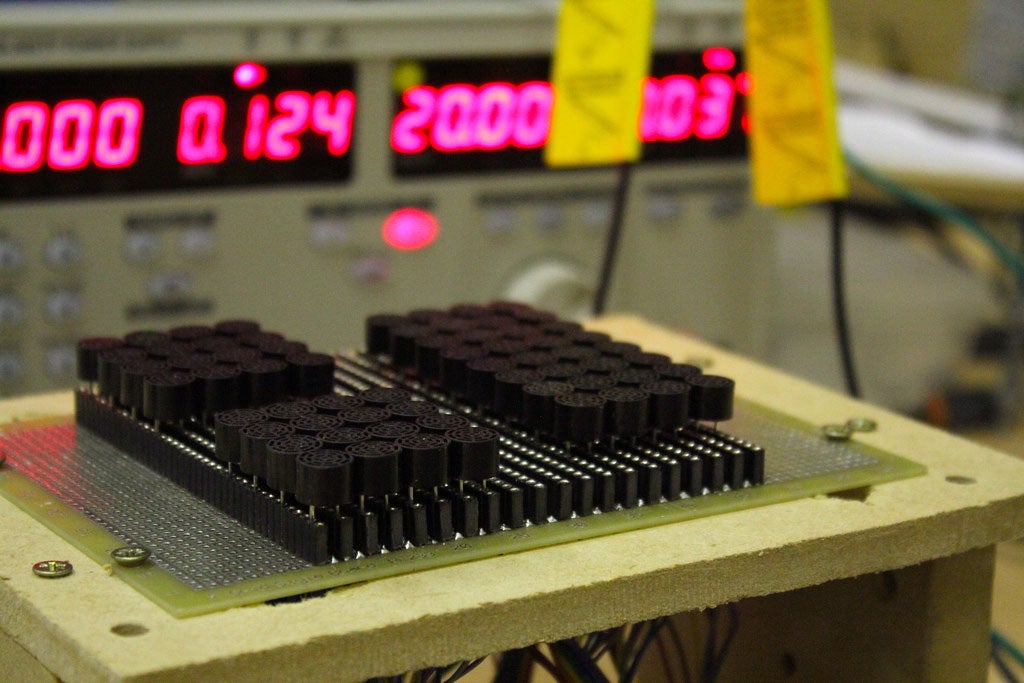

Ultrahaptics uses an array of tiny speakers – called ‘ultrasonic transducers’ – which use waves of ultrasound to create vibrations that can be felt at quite precise points in mid-air. And now engineers at the Bristol Interaction and Graphics group have found a way to beam the signals through a screen, creating an invisible layer of vibrations above it and allowing it to be used as a computer interface.

Haptic feedback is something you’ll be familiar with from smartphones and game controllers: the touch-sense feedback that tells you when you’ve done something, for instance when you press a virtual button on a smartphone screen.

However, this new technology represents something of a breakthrough in the field, which until now has always required physical contact with the device providing the feedback.

Tom Carter, a PhD student with the BIG research group, told The Engineer that there were plenty of possible applications for the new technology, including in-car sat-navs for drivers who don’t want to take their eyes off the road.

“Even if you provide [haptic] feedback on a touch screen you have to fumble around pressing all the buttons, whereas with our system you can wave your hand vaguely in the air and you’ll get the feeling on the hand,” he said.

“We can give different points of feeling at the same time that feel different so you can assign a meaning to them. So the ‘play’ button feels different from the ‘volume’ button and you can feel them just by waving your hand.”

It works in a fairly simple way. Waves of ultrasound are projected above the screen and displace the air, creating a pressure difference; this is called acoustic radiation pressure. Focussing the ultrasound waves on a specific point in mid-air creates a noticeable pressure difference at that point. The device creates this focal point by triggering the ultrasound transducers with specific phase delays, so that all the sound waves arrive at the point simultaneously.
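The phase-delay idea can be sketched in a few lines of Python. This is a minimal illustration under stated assumptions, not the Bristol team’s code: the 40 kHz drive frequency, the 343 m/s speed of sound, the 16 × 16 grid with 10 mm spacing and all function names are hypothetical, and each transducer is treated as an ideal point source with simple 1/r spreading. Each transducer waits for the difference between the longest time of flight and its own, so every wavefront reaches the focal point at the same instant.

```python
import cmath
import math
from typing import List, Tuple

SPEED_OF_SOUND = 343.0  # m/s in air at roughly 20 °C (assumed)
FREQUENCY = 40_000.0    # Hz; assumed ultrasonic drive frequency

Point = Tuple[float, float, float]


def grid_array(n: int, pitch: float) -> List[Point]:
    """Hypothetical n x n grid of transducers in the z = 0 plane, centred on the origin."""
    offset = (n - 1) * pitch / 2
    return [(i * pitch - offset, j * pitch - offset, 0.0)
            for i in range(n) for j in range(n)]


def focus_delays(transducers: List[Point], focus: Point) -> List[float]:
    """Firing delay (seconds) for each transducer so all waves reach `focus` together."""
    flight_times = [math.dist(t, focus) / SPEED_OF_SOUND for t in transducers]
    latest = max(flight_times)
    # The farthest transducer fires first (zero delay); nearer ones wait longer.
    return [latest - tof for tof in flight_times]


def delays_to_phases(delays: List[float]) -> List[float]:
    """Express each delay as a phase offset (radians) of the continuous sine drive."""
    return [(2 * math.pi * FREQUENCY * d) % (2 * math.pi) for d in delays]


def pressure_amplitude(transducers: List[Point], phases: List[float], point: Point) -> float:
    """Relative pressure amplitude at `point`: point sources, 1/r spreading, no directivity."""
    k = 2 * math.pi * FREQUENCY / SPEED_OF_SOUND  # wavenumber
    total = 0j
    for source, phi in zip(transducers, phases):
        r = math.dist(source, point)
        # A wave fired `phi` radians late also accumulates k*r of phase on its way.
        total += cmath.exp(-1j * (phi + k * r)) / r
    return abs(total)


# Usage: a 16 x 16 array with 10 mm pitch, focusing 20 cm above its centre.
array = grid_array(16, 0.010)
focus = (0.0, 0.0, 0.20)
delays = focus_delays(array, focus)
phases = delays_to_phases(delays)
at_focus = pressure_amplitude(array, phases, focus)
off_focus = pressure_amplitude(array, phases, (0.03, 0.0, 0.20))
print(f"at focus: {at_focus:.1f}, 3 cm to the side: {off_focus:.1f}")
```

Because every contribution arrives in phase at the focus, the amplitudes add up there, while 3 cm to the side they largely cancel. A real device would also need to model each transducer’s directivity, which this sketch ignores.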

We perceive the waves as vibration on the skin, and by varying the modulation frequency or pulsing the feedback, different ‘textures’ can be created. Giving each feedback point its own modulation frequency lets several different textures be applied to the user at the same time, potentially allowing for a range of mid-air sensations.
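A rough sketch of how modulation could give each point its own feel, again in Python and again hypothetical: it assumes a 40 kHz carrier and picks illustrative modulation frequencies for imaginary ‘play’, ‘volume’ and ‘stop’ controls. The ultrasonic carrier itself is far too fast for the skin to register; what a hand would feel is the slow envelope that scales it, here either a smooth raised-cosine or an on/off pulse.

```python
import math
from dataclasses import dataclass

CARRIER_HZ = 40_000.0  # assumed ultrasonic carrier, far above what the skin can feel


@dataclass
class FeedbackPoint:
    """A hypothetical mid-air control, such as a 'play' or 'volume' button."""
    name: str
    modulation_hz: float  # low-frequency envelope that the skin actually perceives
    pulsed: bool = False  # on/off square envelope instead of a smooth one

    def envelope(self, t: float) -> float:
        """Amplitude envelope in [0, 1] at time t (seconds)."""
        phase = (self.modulation_hz * t) % 1.0
        if self.pulsed:
            return 1.0 if phase < 0.5 else 0.0
        # Raised cosine: 0 at the start of each cycle, 1 halfway through.
        return 0.5 * (1.0 - math.cos(2 * math.pi * phase))

    def drive(self, t: float) -> float:
        """Ultrasonic carrier scaled by the envelope: the signal focused on this point."""
        return self.envelope(t) * math.sin(2 * math.pi * CARRIER_HZ * t)


def perceived_cycles(point: FeedbackPoint, duration: float,
                     sample_rate: float = 10_000.0) -> int:
    """Count envelope cycles via downward crossings through 0.5 (one per cycle)."""
    n = round(duration * sample_rate)
    samples = [point.envelope(i / sample_rate) for i in range(n)]
    return sum(1 for prev, cur in zip(samples, samples[1:]) if prev >= 0.5 > cur)


# Usage: three controls that share the same carrier but feel different.
controls = [
    FeedbackPoint("play", modulation_hz=200.0),
    FeedbackPoint("volume", modulation_hz=50.0),
    FeedbackPoint("stop", modulation_hz=100.0, pulsed=True),
]
for control in controls:
    print(control.name, perceived_cycles(control, duration=0.1))
```

This sketch only models the per-point envelopes; it does not show how several focal points would be combined on one transducer array.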

The technology is still in its infancy, but researchers are developing ideas, including mid-air gestures and layers of tactile information hovering over displays. If all goes to plan, it could usher in the kind of technology used by Tom Cruise’s character in the film “Minority Report”, in which he’s seen manipulating complex layers of crime-scene information on glass at a distance, using special gloves.
