Google and Stanford unveil new machine vision technology that recognizes complex scenes

It's the difference between 'the cat' and 'the cat sat on the mat' - but one day CCTV might be able to not only recognize individuals but behaviour

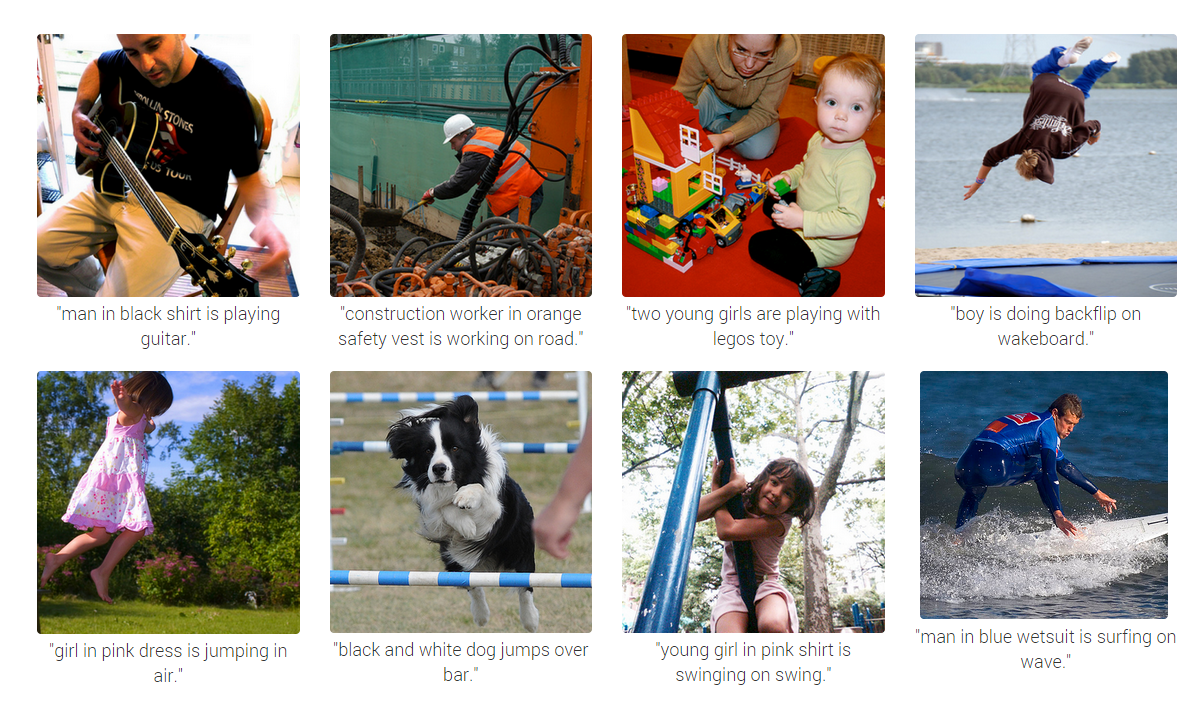

Researchers have unveiled a step forward in artificial intelligence that allows computers to describe the content of photos and videos with a greater accuracy than ever before – occasionally even aping human comprehension.

Researchers from Google and Stanford University in the US independently created software that does not only recognize individual objects (the usual target of machine vision) but complex scenes, involving multiple individuals, objects and activities.

While the software could be initially used to help catalogue online content (Google’s mission statement is still to “organize the world’s information”) robots in the future might use the algorithms to safely navigate real world environments.

However, the research also has implications for surveillance and could allow CCTV to not only identify individuals but also what they’re doing – spotting the difference, for example, between pedestrians walking down a street and people vandalising the same area.

The system works by combining two neural networks (connected computers that can ‘learn’ to spot patterns in data), one of which recognizes individual elements with an image and another that uses natural language processing to turn this into a cohesive description.

“A picture may be worth a thousand words, but sometimes it’s the words that are most useful - so it’s important we figure out ways to translate from images to words automatically and accurately,” wrote Google in a blog post announcing the new research.

However, the technology is still prone to mistakes and some machine learning specialist note that the capacity is still far from “understanding” in the sense we use for human comprehension.

Join our commenting forum

Join thought-provoking conversations, follow other Independent readers and see their replies

Comments

Bookmark popover

Removed from bookmarks