James Lovelock: We need lone scientists

The creator of the Gaia Theory has also been a prolific inventor. Now in his 95th year, James Lovelock mourns the passing of the golden age of solitary scientific genius

It was an article by the journalist-philosopher Jonah Lehrer in the Wall Street Journal in 2011 that made me think that intelligence, pure intellect, might not be all that it was cracked up to be.

Lehrer argued that the days of the lone scientist were over and that, if the equals of the great individual scientists of the past – Galileo, Newton, Leibniz, Darwin and Einstein – appeared today, they would find no place in the modern world of science. Science, he wrote, was now so complex and expensive that only governments and large corporations could afford to support it. Successful science, he seemed to imply, no longer came from the lone conquest of a scientific Everest. The modern world demanded the contest of hugely expensive teams in the science equivalent of an Olympic stadium.

My first instinctive thought was that this was dangerous nonsense. Great brains could function now as well as or better than in earlier times. But then I realised that he was at least partly right. When I started my practice as a lone scientist-inventor in 1961, the bureaucratic restrictions were mild and easy to overcome, but now, more than 50 years later, they are formidable.

In most nations of the developed world, they rule out the greater and more interesting parts of hands-on science. True, it might be possible for a present-day Descartes, Einstein or Newton to think and use paper or a PC to record and expand their thoughts, but a Faraday or a Darwin would be buried in paperwork and obliged to spend their time solving problems concerning health and safety, and political correctness, today's equivalent of the theocratic oppression of Galileo. In the world of corporate science there would be little time left for their singular and breathtaking ideas.

More than that, the internet has made the human world a monstrous village with an ever-growing population of nags, scolds and officious fools; soon, I fear, we face a life like that Japanese nightmare – the one that sees an outstanding brain as a nail that stands out and is always hammered in.

For the past 40 years, I have worked alone in my own laboratory but as part of a rich life within a family and a village community. It is a mistake to regard a lone scientist as an unnatural or pathologically disabled person; I do not think that I was disabled or even lonely. What I mean by a lone scientist is one who is autarkic and does not need immersion in a think-tank to excite ideas, which arise naturally through wondering. It would be easy for me to be a lone scientist within a good university department or scientific institute, provided that social, tribal and bureaucratic influences were minimal. You can easily distinguish lone from communal scientists by the authorship of their papers. The true loners write alone or with one, or rarely two, colleagues.

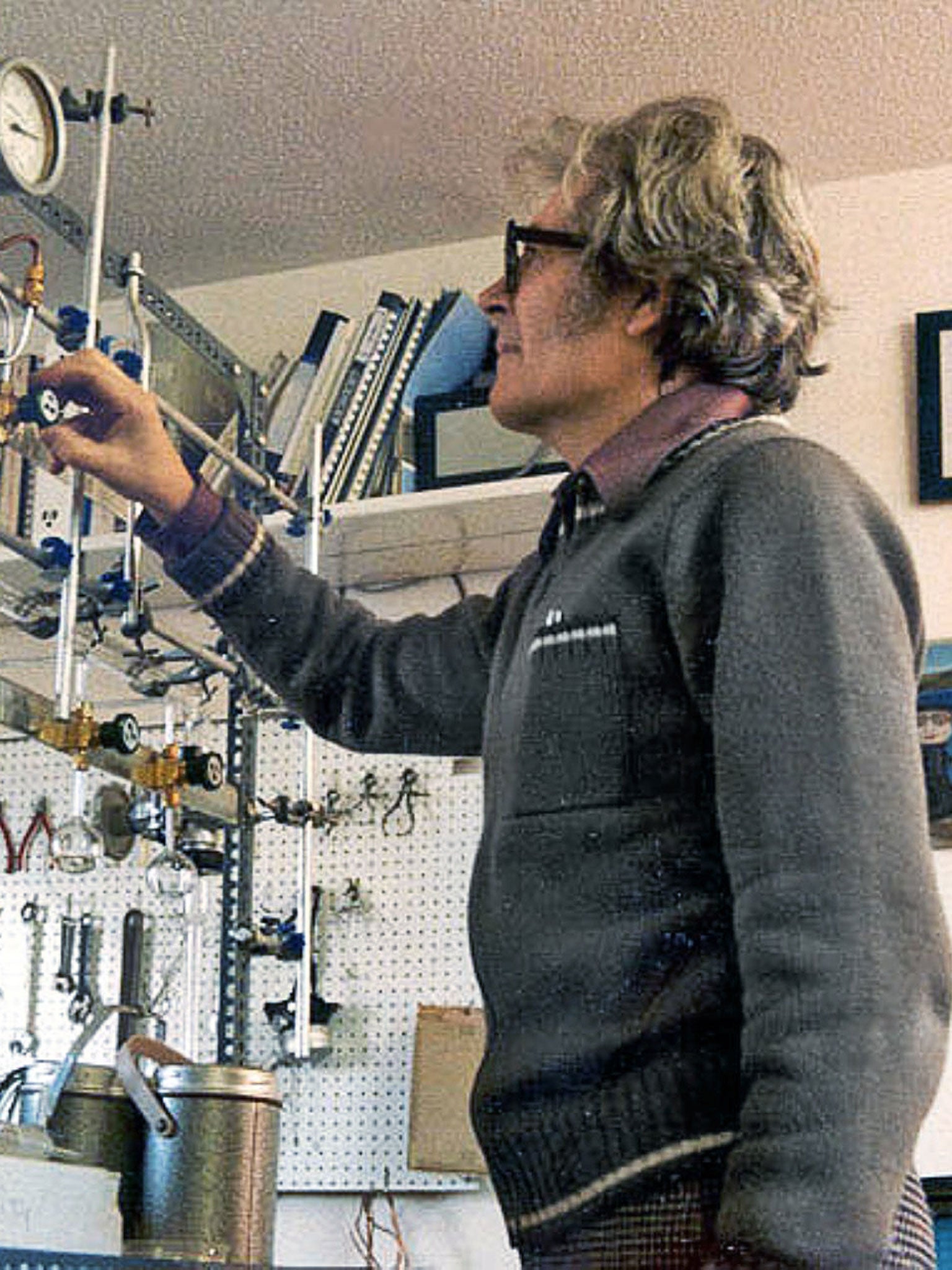

Everyone thinks they know what a scientist is and how she or he works, in a serious laboratory where white-coated scientists conduct their experiments. But this photograph illustrates my home laboratory in 1981, where I was about equally engaged in invention as in science. Note that I was not white-coated: I never felt the need to wear a uniform. I merely wore old clothes that could be discarded if contaminated.

My laboratory in no way resembled the work environment of a senior scientist in today's world. He or she works from an office and has a sizeable staff to perform practical experiments or plan expeditions to observe the Earth's near and far environment. More and more, the exciting and slightly dangerous experiments with chemicals, high voltages and radioactive substances are done by computer simulations. From my viewpoint, science lost its glamour about 30 years ago. No doubt the few surviving dinosaurs 60 million years ago felt the same about the safer mammalian world that was thrust upon them. Those in the arts know well the delights of hand and eye creativity and the true freedom it brings, but it is now so rarely found in science that I feel an urgent need to recommend at least the trial of the lone practice of science, if only because it is a way to break the disciplinary integuments that stifle scientists and science itself.

I have always, from childhood on, regarded science as a calling, a vocation, never as a career. For this reason, I chose employment as a laboratory assistant in the late 1930s to learn the craftsmanship of science; the next 23 years I spent doing postgraduate medical research, almost all of it as a tenured staff member of the National Institute for Medical Research (NIMR). This was needed to round off my apprenticeship to science as a professional. From 1961 to 1964, I was employed as a research Professor at Baylor College of Medicine in Texas, but during that time I fully developed my vocation as a lone practitioner. I even have the ultimate professional qualification: CChem, a chartered chemist of the Royal Institute of Chemistry.

There is nothing quirky about this way of life, but it does differ from that of most professionals because the bulk of my income went towards the science I did rather than to improve the standard of living enjoyed by me and my wife. I aimed towards maximum economy and saw no point in buying expensive equipment, because I knew that such apparatus (even when said to be the latest) was probably 10 years out of date. I knew as an experienced inventor of scientific instruments that it takes many years to develop the working model of an idea into practical, saleable hardware. I could invent new equipment that was truly at the leading edge, so why did I need to buy what was already outdated?

I have never kept count of the many inventions I made, but it must run into the hundreds. Most of them were trivial, such as a wax pencil that would write clearly on cold wet glassware straight from a refrigerator. It was published as one of my first letters to Nature in 1945. Although I am not the formal inventor with a patent, I am fairly sure that I was the first to make a microwave oven powered by a one kilowatt magnetron. It was practical and was used almost daily for several months to reanimate chilled small animals and to cook my lunch at the National Institute for Medical Research in the 1950s.

If there was something very complex that I could not easily make, such as an electron microscope or a new form of mass spectrometer, I considered solving my problem another way, or sought a friend who could sell or donate spare time on his instrument, or could do the job for me. The one exception was the purchase of the best computer I could afford. It so increased my productivity that the cost was justified.

When I look back, I am surprised by how often inventions stole into my brain when someone entered my room and asked: "Can you think of a way to do...?" An example easy to recall is the sudden appearance at the entrance of my lab in wartime London in 1943 of my boss, the physiologist RB Bourdillon. He said: "Lovelock, can you make for me an instrument that will measure heat radiation accurately and record if the heat flux was enough to cause a first-, a second- or a third-degree burn on bare, exposed skin? I need it by 10 tomorrow morning for an important meeting at the War Office." It was then about 4pm.

From the expression of the need to the product took about four hours of thought and experimental test in 1943. I suspect that posing the same need now to a civil service laboratory would provide several months' work for a team of scientists and technicians. The crux is need. In 1943, it was the solution to an urgent real-life problem; now the need would be the sustenance of employment, expansion and preferment.

Invention, science and war have always been tightly connected. Newton's laws of motion were born from the needs of the British Navy, and Darwin travelled on his amazing journey of discovery as a naturalist employed on the naval ship The Beagle.

Humanity and science were offered a cornucopia of benefits from the accelerated inventions of the Second World War. Had we been less combative animals, we could have used this new knowledge constructively. We could have made the observation of the Earth from space a priority, built satellites that viewed the land, the air and the oceans, and seen the looming dangers of global warming in time; instead, we made space missiles. Such a statement sounds good when claimed with liberal hindsight. But if we think a little more deeply we have to ask: what university department or scientific institute anywhere would have bothered to spend as much as the Manhattan Project did on nuclear energy, or in peacetime thought of using rockets to put satellites in space? It needs the combativeness and the tribal anger of war to undertake such endeavours, and this is the crux of the global warming problem; we do not yet regard it to be as serious as a major war. It may, in fact, be even more serious.

In the 1960s, I worked for Nasa at the Jet Propulsion Laboratory in California and got to know the real rocket scientists, many of whom were not infinitely wise, nor did they all speak with Middle European accents and stand before a blackboard writing cadenzas of incomprehensible equations. Instead, those I met built those wonderful spacecraft that let us know for the first time the nature of our solar system and see the planets almost as sharply as if we were on them ourselves; most were Americans in early middle-age, including outstandingly competent engineers and astrophysicists. Surprisingly, to me, there was a sprinkling of older men who previously had been skilled in building intricate and beautiful things such as tiny yet perfectly working cars, railway engines, clocks and so on. I was comfortable with engineers of this kind, people who understood thermodynamics and could also build exquisitely crafted model railway engines.

My experience at Nasa highlights the interplay between science and engineering. They are like a married couple that has never fallen out of love. Neither one could exist without the other: even a mathematician performing his intricate acts of intuition would be lost without a pen and paper; and who but an engineer could have made them? Where would the biologist be without a microscope? Galileo is remembered as a scientist, but he was first a superb engineer who designed and made a telescope with sufficient resolution to see the satellites of Jupiter.

So, down through history, the wisdom of science accumulated. Lone observers noticed something different and wondered, then patiently waited and checked their observations to confirm that they always linked with the consequences of their prediction. Until about the middle of the 20th century, almost all science began this way; but then, especially in times of war, powerful leaders of governments and business imagined that science could be managed like an army. They were confident that the employment of 100 scientists would achieve far more than one alone. Almost the reverse is true, in fact: even a million reasonably intelligent men or women gathered at the ultimate interdisciplinary conference would rarely, if ever, match an Einstein or a Darwin. Much worse, the funding of that million would leave nothing over to sustain a lone genius.

There were a few other lone scientists when I started to practise in 1964, but before long the numbers declined, until now they are as rare as ectoplasm. Science is now biased against approval or support of them, so that it is difficult for them to succeed. In particular, the recently devised processes of peer review and the funding of science by grant agencies are both prejudiced against outsiders and loners. The few lone scientists now in existence find it almost impossible to publish their work and ideas in approved scientific journals, regardless of its quality.

Without peer-reviewed papers to judge an applicant, funding agencies cannot offer financial support. The lone scientist could be like his archetype, the artist, starving in a garret. That is bad enough, but rejection of the publication of science done by lone scientists too closely resembles the censorship of Galileo when loners are treated as heretics. I fear that, as we move to a communal life in vast cities, the automatic rejection of loners by established teams will be seen as part of our evolutionary history and they may become extinct.

I have been a practising scientist for 70 years and a loner for 56 of them. I am not an impoverished amateur making gadgets in his garage, but professionally qualified in physics, chemistry and non-clinical medicine. In no way is this meant as a polemic arguing for the replacement of teams by lone scientists. Nor is it meant as a denigration of teamwork. The truth is that there are places for large teams as well as for the lone creators. We need them both, and we need them now.

This is an edited extract from 'A Rough Ride to the Future', by James Lovelock (Allen Lane, rrp £16.99 hardback, £11.99 ebook), which is published on 3 April.

‘Unlocking Lovelock: Scientist, Inventor, Maverick’, a free exhibition celebrating the life and career of James Lovelock, opens at the Science Museum on 9 April
