AI could ‘kill many humans’ within two years, warns Sunak adviser

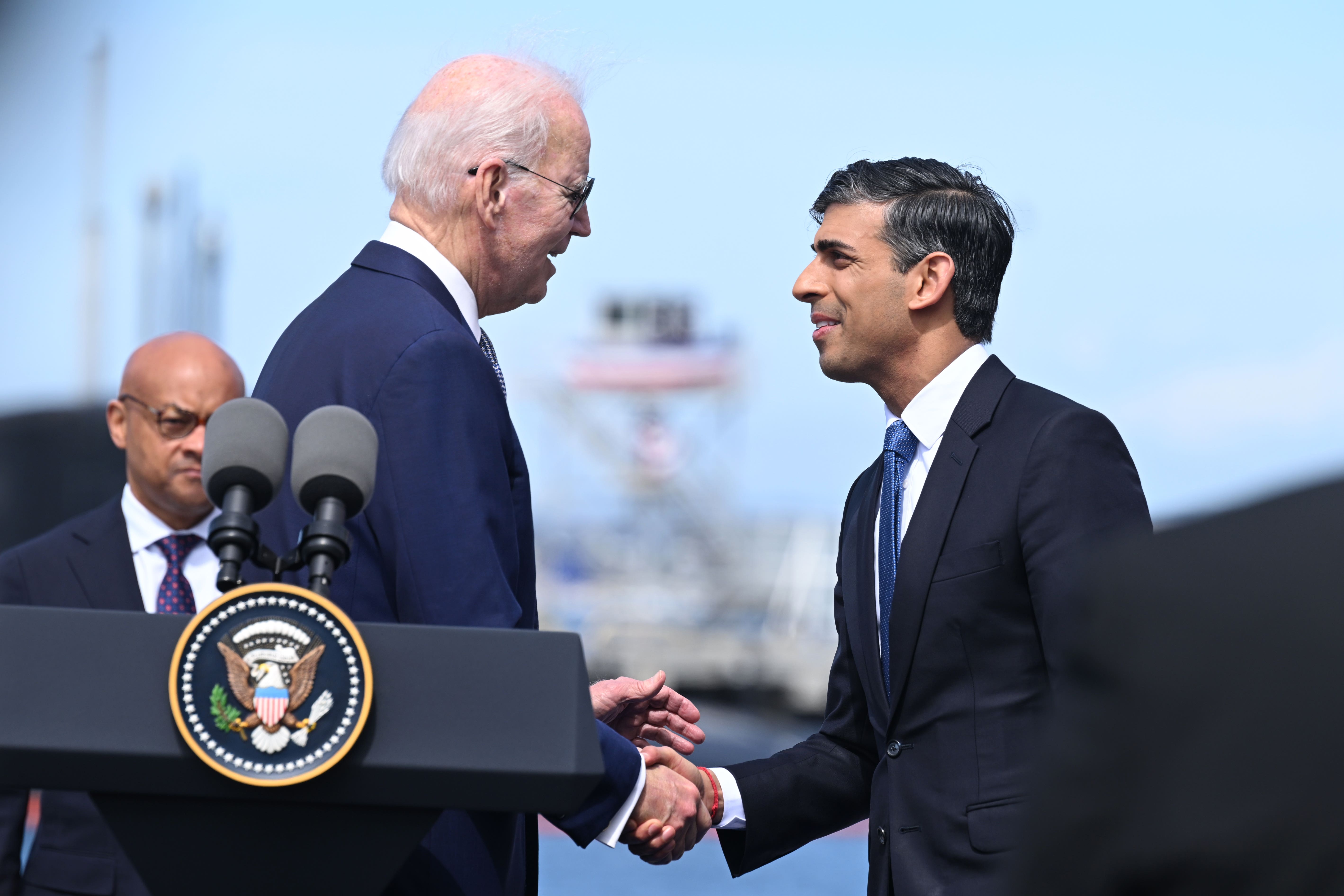

Stark warning comes as Rishi Sunak prepares to persuade Joe Biden of ‘grand plan’ to control artificial intelligence

Artificial intelligence (AI) could create a “dystopia”, a government minister has warned, after Rishi Sunak’s adviser on the technology said it could become powerful enough to “kill many humans” in only two years’ time.

Matt Clifford said even short-term risks were “pretty scary”, with AI having the potential to create cyber and biological weapons that could inflict many deaths. It comes as Mr Sunak heads to the US, where he is set to try to persuade president Joe Biden of his “grand plan” for the UK to be at the centre of international AI regulation.

The PM wants Britain to host a watchdog for AI similar to the International Atomic Energy Agency, and will also propose a new international research body.

Paul Scully, minister for tech and digital economy, told the TechUK Tech Policy Leadership Conference on Tuesday that there should not solely be a focus on a “Terminator-style scenario”.

He said: “If we get it wrong, there is a dystopian point of view that we can follow here. There’s also a utopian point of view. Both can be possible.

“If you’re only talking about the end of humanity because of some, rogue, Terminator-style scenario, you’re going to miss out on all of the good that AI is already functioning - how it’s mapping proteins to help us with medical research, how it’s helping us with climate change.

“All of those things it’s already doing and will only get better at doing.

“We have to take breathing space to make sure we’re getting this right for the whole of society, as well as the benefit of the sector.”

Mr Clifford said that unless AI producers are regulated on a global scale, there could be “very powerful” systems that humans could struggle to control.

Mr Clifford’s intervention comes after a letter backed by dozens of top experts – including many of the pioneers of AI – warned that the risks of the technology should be treated with the same urgency as pandemics or nuclear war.

Senior bosses at Google DeepMind and Anthropic signed the letter along with the so-called “godfather of AI”, Geoffrey Hinton. Mr Hinton resigned from his job at Google earlier this month – warning that in the wrong hands, AI could spell the end of humanity.

Mr Clifford is advising Mr Sunak on the development of a government taskforce which is looking into AI language models such as ChatGPT and Google Bard, and is also chairman of the Advanced Research and Invention Agency (Aria).

He told TalkTV: “The near-term risks are actually pretty scary. You can use AI today to create new recipes for bio weapons or to launch large-scale cyber attacks. These are bad things.”

The No 10 adviser added: “The kind of existential risk that I think the letter writers were talking about is ... about what happens once we effectively create a new species, an intelligence that is greater than humans.”

While conceding that a two-year timescale for computers to surpass human intelligence was at the “bullish end of the spectrum”, Mr Clifford said AI systems were becoming “more and more capable at an ever increasing rate”.

Asked what percentage chance he would give that humanity could be wiped out by AI, Mr Clifford said: “I think it is not zero.”

He continued: “If we go back to things like the bio weapons or cyber [attacks], you can have really very dangerous threats to humans that could kill many humans – not all humans – simply from where we would expect models to be in two years’ time.

“I think the thing to focus on now is how do we make sure that we know how to control these models because right now we don’t.”

AI apps have gone viral online, with users posting fake images of celebrities and politicians, and students using ChatGPT and other “large language models” to generate university-grade essays.

But AI can also perform life-saving tasks: algorithms that analyse medical images, including X-rays, scans and ultrasounds, help doctors to identify and diagnose diseases such as cancer and heart conditions more accurately and quickly.

Mr Clifford said that AI, if harnessed in the right way, could be a force for good. “You can imagine AI curing diseases, making the economy more productive, helping us get to a carbon neutral economy,” he said.

As well as a new global regulator based in London, Mr Sunak is keen for the UK to host an international AI summit this autumn, according to Politico.

The prime minister will also suggest to Mr Biden that a new international research body, similar to the CERN institute that exists for particle physics, is set up for AI.

The PM’s official spokesman said he did not want to “pre-empt” Mr Sunak’s conversation with Mr Biden – but said that the UK could become a global leader on both the new technology and regulatory systems for AI.

“We are not complacent about the potential risks of AI. Equally, it does present significant opportunities for the people of the UK. The UK is looking to lead the way in this space,” said the No 10 spokesman, adding: “You cannot look to proceed with AI without having the right guardrails in place.”

Former Tory leader William Hague said it was in both countries’ interests to co-operate on stronger oversight. “If the US and UK could work together on such regulation while remaining more agile than contrasting approaches in the EU and China, they could create a transatlantic model of good governance,” he wrote in The Times.

Labour is pushing for ministers to bar technology developers from working on advanced AI tools unless they have been granted a licence.

Shadow culture secretary Lucy Powell, who is due to speak at TechUK’s conference on AI on Tuesday, told The Guardian that AI should be licensed in a similar way to medicines or nuclear power. “That is the kind of model we should be thinking about,” she said.
