Will AI ever understand human emotions?

Robots may match humans in recognising different types of emotions in the next few decades

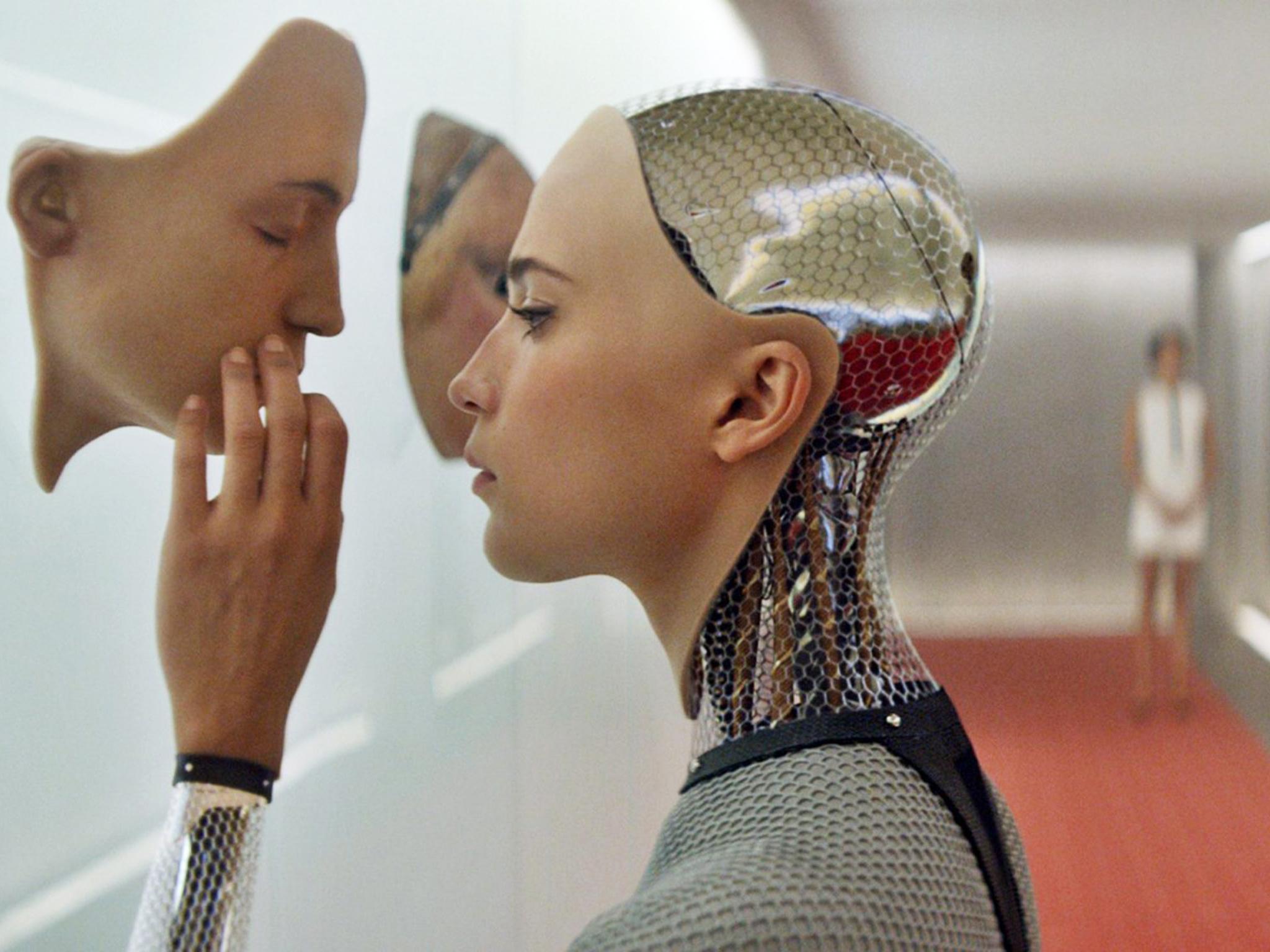

How would you feel about getting therapy from a robot? Emotionally intelligent machines may not be as far away as they seem. Over the last few decades, artificial intelligence (AI) has become increasingly good at reading emotional reactions in humans.

But reading is not the same as understanding. If AIs cannot experience emotions themselves, can they ever truly understand us? And, if not, is there a risk that we ascribe to robots properties they don’t have?

The latest generation of AIs has come about thanks to an increase in the data available for computers to learn from, as well as improved processing power. These machines are increasingly competitive in tasks that have always been perceived as human.

AIs can now, among other things, recognise faces, turn face sketches into photos, recognise speech and play Go.

Identifying criminals

Recently, researchers have developed an AI that is able to tell whether a person is a criminal just by looking at their facial features. The system was evaluated using a database of Chinese ID photos and the results are jaw-dropping. The AI mistakenly categorised innocent people as criminals in only around 6 per cent of cases, while it successfully identified around 83 per cent of the criminals. This leads to a staggering overall accuracy of almost 90 per cent.
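The overall accuracy figure depends on what share of the photos show criminals, which is not stated here. As a rough sketch, the Python below combines the two quoted rates under two assumed class mixes. The 50 and 40 per cent shares are illustrative assumptions, as is the function name `overall_accuracy`.

```python
def overall_accuracy(true_positive_rate, false_positive_rate, positive_fraction):
    """Fraction of all photos classified correctly.

    positive_fraction is the share of photos that show criminals.
    """
    true_negative_rate = 1.0 - false_positive_rate
    negative_fraction = 1.0 - positive_fraction
    return (true_positive_rate * positive_fraction
            + true_negative_rate * negative_fraction)


# Rates quoted in the article.
TPR = 0.83  # criminals correctly identified
FPR = 0.06  # innocent people wrongly flagged

# Assumed class mixes (hypothetical, not taken from the study).
balanced = overall_accuracy(TPR, FPR, positive_fraction=0.5)
skewed = overall_accuracy(TPR, FPR, positive_fraction=0.4)

print(f"balanced mix: {balanced:.3f}")
print(f"40% criminals: {skewed:.3f}")
```

Under either assumed mix the result falls between 88 and 90 per cent, which is consistent with the quoted figure. It also shows that a single accuracy number hides how the errors are split between the two groups.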

The system is based on an approach called “deep learning”, which has been successful in perceptive tasks such as face recognition. Here, deep learning combined with a “face rotation model” allows the AI to verify whether two facial photos represent the same individual even if the lighting or angle changes between the photos.

Deep learning builds a “neural network”, loosely modelled on the human brain. This is composed of hundreds of thousands of neurons organised in different layers. Each layer transforms the input, for example a facial image, into a higher level of abstraction, such as a set of edges at certain orientations and locations. This automatically emphasises the features that are most relevant to performing a given task.
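To make the idea of stacked layers concrete, here is a minimal sketch of a forward pass through a tiny network in plain Python. It is an illustration only, not the system from the study: the layer sizes, the random untrained weights and the helper names `dense_layer` and `random_layer` are all assumptions, and a real network would have far more neurons and would learn its weights from data.

```python
import random


def dense_layer(inputs, weights, biases):
    """One layer: a weighted sum of the inputs per neuron, then a ReLU cut-off."""
    outputs = []
    for neuron_weights, bias in zip(weights, biases):
        total = sum(w * x for w, x in zip(neuron_weights, inputs)) + bias
        outputs.append(max(0.0, total))  # ReLU: negative sums become zero
    return outputs


def random_layer(n_inputs, n_neurons, rng):
    """Build untrained weights and zero biases for one layer (illustrative only)."""
    weights = [[rng.uniform(-1, 1) for _ in range(n_inputs)]
               for _ in range(n_neurons)]
    biases = [0.0] * n_neurons
    return weights, biases


rng = random.Random(0)
pixels = [rng.random() for _ in range(16)]  # stand-in for a tiny 4x4 image
layer_sizes = [8, 4, 2]                     # each layer is smaller than the last

activations = pixels
for size in layer_sizes:
    weights, biases = random_layer(len(activations), size, rng)
    activations = dense_layer(activations, weights, biases)
    print(f"layer with {size} neurons -> {len(activations)} values")
```

Each pass through the loop turns the previous layer's output into a smaller set of numbers, which is the sense in which later layers hold a more abstract summary of the input.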

Given the success of deep learning, it is not surprising that artificial neural networks can distinguish criminals from non-criminals – if there really are facial features that can discriminate between them. The research suggests there are three. One is the angle between the tip of the nose and the corners of the mouth, which was on average 19.6 per cent smaller for criminals. The upper lip curvature was also on average 23.4 per cent larger for criminals while the distance between the inner corners of the eyes was on average 5.6 per cent narrower.

At first glance, this analysis seems to suggest that outdated views that criminals can be identified by physical attributes are not entirely wrong. However, it may not be the full story. It is interesting that two of the most relevant features are related to the lips, which are our most expressive facial features. ID photos such as the ones used in the study are required to have a neutral facial expression, but it could be that the AI managed to find hidden emotions in those photos. These may be so minor that humans would struggle to notice them.

It is difficult to resist the temptation to look at the sample photos displayed in the paper, which is yet to be peer reviewed. Indeed, a careful look reveals a slight smile in the photos of non-criminals – see for yourself. But only a few sample photos are available so we cannot generalise our conclusions to the whole database.

The power of affective computing

This would not be the first time that a computer was able to recognise human emotions. The field known as “affective computing” has been around for several years. It is argued that, if we are to live and interact comfortably with robots, these machines should be able to understand and react appropriately to human emotions. There is much work in the area, and the possibilities are vast.

For example, researchers have used facial analysis to spot struggling students in computer tutoring sessions. The AI was trained to recognise different levels of engagement and frustration, so that the system could know when the students were finding the work too easy or too difficult. This technology could be useful to improve the learning experience in online platforms.

A company called BeyondVerbal has also used AI to detect emotions based on the sound of our voices. It has produced software that analyses voice modulation and seeks specific patterns in the way people talk. The company claims to be able to correctly identify emotions with 80 per cent accuracy. In the future, this type of technology might, for instance, help autistic individuals to identify emotions.

Sony is even trying to develop a robot able to form emotional bonds with people. There is not much information about how they intend to achieve that, or what exactly the robot will do. However, they mention that they seek to “integrate hardware and services to provide emotionally compelling experiences”.

An emotionally intelligent AI has several potential benefits, be it to give someone a companion or to help us perform certain tasks – ranging from criminal interrogation to talking therapy.

But there are also ethical problems and risks involved. Is it right to let a patient with dementia rely on an AI companion and believe it has an emotional life when it doesn’t? And can you convict a person based on an AI that classifies them as guilty? Clearly not. Instead, once a system like this is further improved and fully evaluated, a less harmful and potentially helpful use might be to trigger further checks on individuals considered “suspicious” by the AI.

So what should we expect from AI going forward? Subjective topics such as emotions and sentiment are still difficult for AI to learn, partly because the AI may not have access to enough good data to analyse them objectively. For instance, could AI ever understand sarcasm? A given sentence may be sarcastic when spoken in one context but not in another.

Yet the amount of data and processing power continues to grow. So, with a few exceptions, AI may well be able to match humans in recognising different types of emotions in the next few decades. But whether an AI could ever experience emotions is a controversial subject. Even if it could, there may well be emotions it could never experience – making it difficult for it ever to truly understand them.

Leandro Minku, lecturer in computer science, University of Leicester. This article first appeared on The Conversation (theconversation.com)
